The blue light doesn't just illuminate a room. It vibrates. In the quiet hours of a Tuesday morning, long after the rest of the house has drifted into the soft rhythmic breathing of sleep, that light pulses against the walls of a teenager’s bedroom. It is a silent, flickering heartbeat. On the other side of that glass is a mechanism so finely tuned that it makes a Swiss watch look like a pile of rusted gears. It is an algorithm designed to do one thing: keep the eyes fixed.

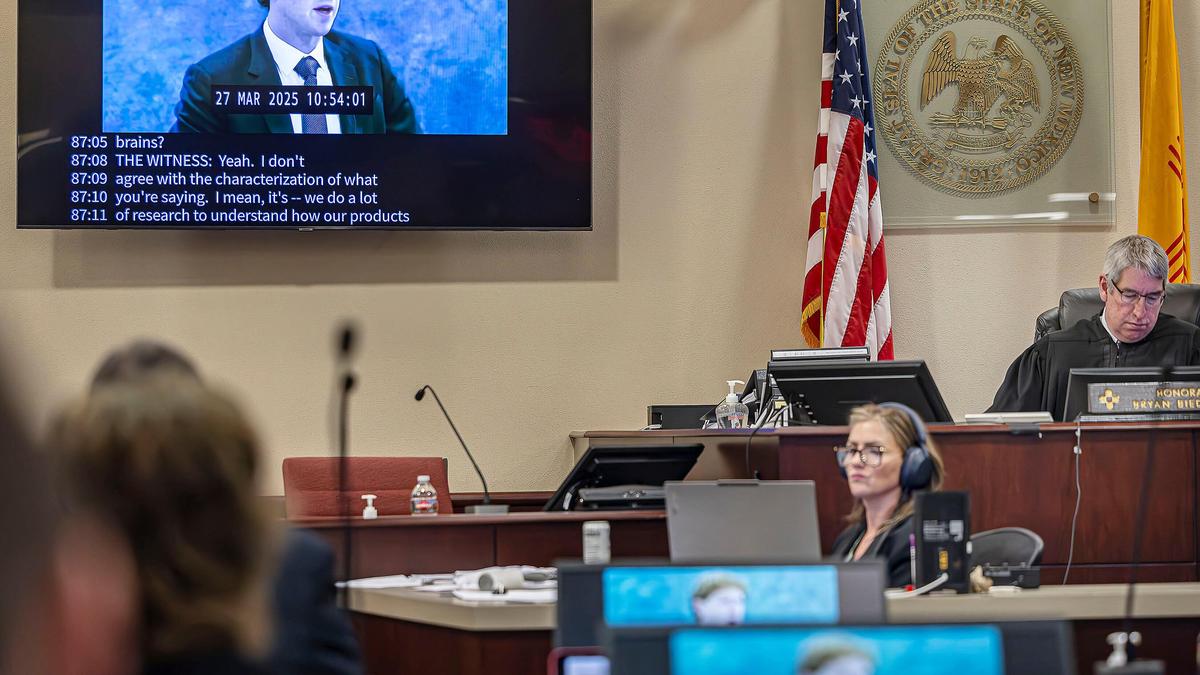

We often talk about social media as a "platform" or a "tool." We use architectural language to describe what is, in reality, a psychological deep-sea fishing expedition. Recently, a jury looked at the internal blueprints of this architecture and decided that the foundation wasn't just shaky. It was built on the deliberate exploitation of a developing brain’s inability to say "no."

The verdict against Meta wasn't a sudden bolt of lightning. It was the culmination of a slow, agonizing leak of internal documents that confirmed what parents had been whispering in doctors' offices for a decade. The company knew. They didn't just suspect that their products were linked to a spike in depression, anxiety, and body dysmorphia among minors. They had the data. They had the spreadsheets. And, according to the legal findings, they chose the graph that went up and to the right over the wellbeing of the children fueling that growth.

Consider a hypothetical teenager named Maya. She is fourteen. Her prefrontal cortex, the part of the brain responsible for impulse control and long-term planning, is still under construction. It won't be finished for another decade. When Maya scrolls through a feed that has been curated by a multi-billion-dollar recommendation engine, she isn't "choosing" content. She is reacting to a dopamine loop.

Every like is a hit. Every ignored post is a micro-rejection.

Imagine a specialized laboratory where the brightest minds in behavioral science are tasked with finding the exact frequency of notification that prevents a human from putting a device down. Now, imagine that laboratory has a direct, unmonitored line into the pocket of every middle schooler in the country. This isn't a metaphor for the industry; it is the industry’s literal business model.

The jury's determination centered on a devastating premise: Meta knowingly harmed children's mental health. This isn't a case of "unintended consequences" or a "learning curve." The evidence suggested a cold, calculated prioritization of engagement metrics over safety protocols. When internal researchers flagged that Instagram was "toxic" for a significant percentage of teen girls, the response wasn't to dismantle the features causing the harm. The response was to figure out how to keep them on the app longer.

Money has a way of silencing the conscience.

In the corporate boardrooms of Menlo Park, a "user" is a data point. But in a suburban kitchen, a user is a daughter who has stopped eating because her "Explore" page is an endless loop of filtered waists and "thinspo" content. A user is a son who can no longer focus on a book because his brain has been conditioned to crave a new stimulus every six seconds. These are the "invisible stakes" the headlines often miss. We aren't just talking about screen time. We are talking about the fundamental rewiring of human social interaction.

The defense often leans on the idea of "parental responsibility." It’s a seductive argument. If you don't want your kid on the app, take the phone away. But this ignores the reality of modern social survival. To take the phone away is to perform a social lobotomy on a child. They vanish from the digital town square where every party is planned, every joke is shared, and every friendship is maintained.

The system was designed to be inescapable.

When a product is free, you are the product. We've heard that phrase so often it has become white noise. But for a child, the "product" being sold isn't just their data. It is their attention span, their self-image, and their mental stability. The jury saw the receipts. They saw the internal warnings that were buried and the safety teams that were underfunded, while the engineering teams focused on "maximization" were given blank checks.

There is a specific kind of grief that comes with realizing a tragedy was preventable.

For years, the narrative was that kids were just "softer" or that the world was more stressful. We looked for every possible culprit—academic pressure, climate change, economic instability. While those factors are real, they don't explain the sharp, vertical "elbow" in the charts of youth suicide and self-harm that coincides almost perfectly with the mass adoption of the smartphone and the algorithmic feed.

The jury’s decision acts as a mirror held up to an industry that has long operated under the mantra of "move fast and break things." The problem is that the "things" being broken were people.

We are living through a massive, uncontrolled experiment on the human psyche. The results are coming in, and they are grim. The legal system is finally beginning to catch up to the technology, but laws move at the speed of paper while algorithms move at the speed of light.

The question that remains isn't just about liability or settlements. It’s about the value we place on the internal life of a child. If a toy company manufactured a doll that secretly whispered insults to children, it would be off the shelves in twenty-four hours. If a car company knew a seatbelt failed in 13% of crashes but sold it anyway to save a nickel, the executives would face criminal charges.

But when the product is digital, we have allowed ourselves to be gaslit into thinking the harm is imaginary.

The blue light continues to pulse in the dark. It is a silent thief, trading a child’s peace for a shareholder’s dividend. The verdict is a start, a crack in the armor of "inevitability" that Big Tech has worn for decades. But as the sun rises and millions of thumbs begin their morning scroll, the machine is already recalibrating. It is learning. It is optimizing.

The light never really goes out. It just waits for the next set of eyes.